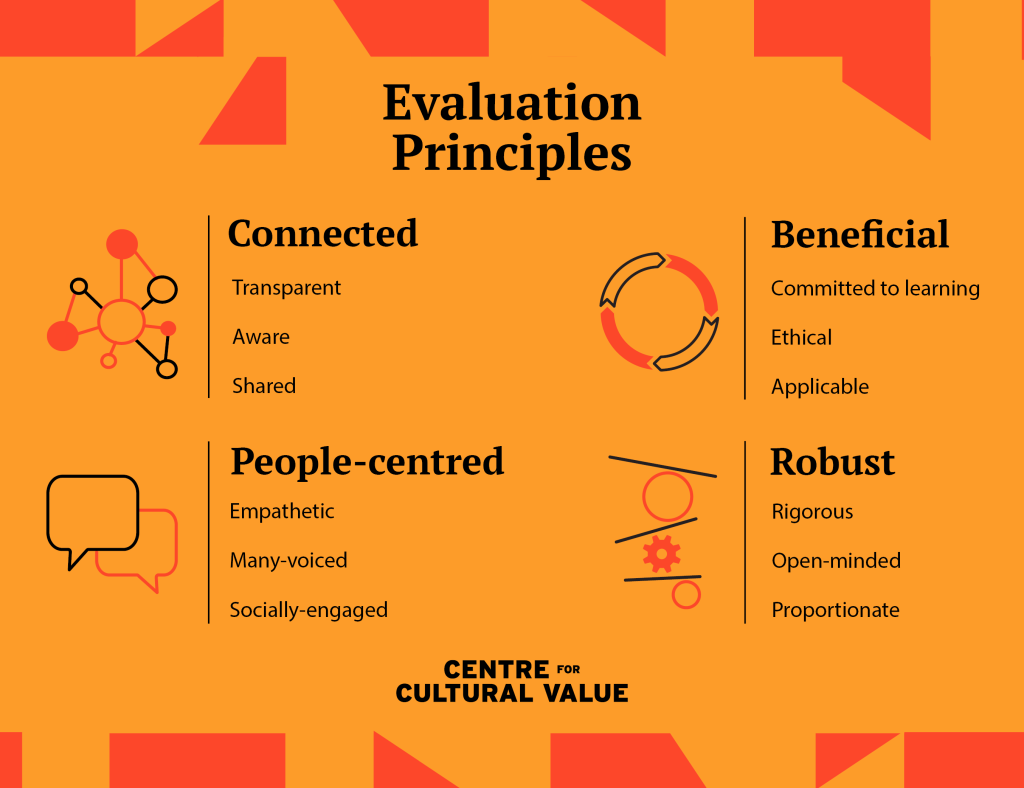

Evaluation Principles

We want to build a shared understanding of the differences that arts, culture, heritage and screen make to people’s lives and to society.

These collaboratively produced Evaluation Principles provide a framework to shape how evaluation is carried out and used in the cultural sector.

Introduction

How do we make our evaluation meaningful? How do we prioritise learning over monitoring and foreground understanding over judgement?

In 2021, the Centre responded to growing demand within the arts, culture and heritage sector for support with evaluation. The result was our co-created Evaluation Principles.

The Principles are not designed as a step-by-step guide to evaluation. With inevitable limits in time and resources, it will never be possible to evenly apply all of the Principles all of the time.

Instead, the Principles are meant to help ground and guide your evaluation practice, supporting you and your stakeholders to set priorities, engage the right people and use appropriate methods to understand the holistic impact of your work.

Explore this page to find resources and prompts to help you carry out evaluation across a range of cultural activities and contexts.

Online evaluation skills training

Our new, free-to-access online course is designed to build your evaluation skills and help you apply the Principles to your work.

Evaluation Principles in practice podcast

Our latest podcast series delves into the challenges of evaluation and asks how we can use the Evaluation Principles in practical ways.

Get more comfortable talking about and learning from failure with this range of resources.

Beneficial

How do we make sure our evaluation addresses our own needs and those of our stakeholders?

Robust

Are our evaluation approaches and methods appropriate, rigorous and geared towards learning?

People-centred

How do we consider a diversity of viewpoints and experiences in order to gain better insights?

Connected

Does our evaluation enable learning with and through one another in a shared and effective way?

Why and how were the Principles created?

We talked to lots of people working in the cultural sector about what they would find useful. A primary request was for a sharing of ideas to inform how evaluation is carried out and used within the sector.

In 2021, we developed the Evaluation Principles with a working group of over 40 representatives from across the sector. The group members cover a range of roles and perspectives on evaluation (including those who do it, who use it and whose work is evaluated).

Read more about the global context behind the Principles, and the key ideas and current challenges that informed their development.

The work was led by Dr Beatriz Garcia from the University of Liverpool and Oliver Mantell from The Audience Agency.

What do you think?

We’d love to hear your thoughts about the Evaluation Principles. Please let us know if you have any feedback, including how you’ve found applying them to your work.

You can contact us at: ccv@leeds.ac.uk